Langston, Cognitive Psychology, Notes 13 -- Reasoning and Decision Making

I. Goals.

A. Where we are/themes.

B. Logic.

C. Heuristics.

D. Probability.

II. Where we are/themes. In this unit we'll turn

our attention to some of the tasks that people think of when they think

of cognitive psychology. How do you think and reason? My main

focus will be on problems in thinking (problems that arise due to the

unfortunate

interaction of normal cognitive activities). There are three

areas

we'll consider:

A. Logic: How people fail to use valid logic when thinking

(just a taste of this, but it fits nicely with the other areas where

we've

seen formal logic run into trouble as a model of cognition).

B. Heuristics: How little short-cut rules lead to problems

when applied to reasoning.

C. Probability: How a lack of appreciation for simple

concepts

from probability can lead people to make bad decisions.

By the end of the unit, you should have some idea of the way people

think, and how the way people think can lead to problems.


III. Logic. We'll just consider one aspect of

logic:

Conditional reasoning.

A. The situation is this: You have a hypothesis (an if-then

statement) and you want to test it. Here's an example from your

text:

If there's fog, then the plane will be diverted. How can we

determine if this statement is true? There are four tests we can

perform:

1. Present a situation where there's fog.

2. Present a situation where there's no fog.

3. Present a situation where the plane's been diverted.

4. Present a situation where the plane has not been diverted.

For each of these tests, we could get a particular outcome and then

determine whether or not the if-then statement is true. Let's

have

some examples. For each of the four situations described below,

write

T or F to indicate whether this evidence leads you to conclude that the

hypothesis is true or false:

1. Present a situation where there's fog and find that the plane

is diverted.

2. Present a situation where there's no fog and find that the

plane is diverted.

3. Present a situation where the plane's been diverted and find

that there's no fog.

4. Present a situation where the plane has not been diverted

and find that there's no fog.

Here's the catch: Only two of these tests involve valid logic

(so only two of these tests can have any bearing on whether or not the

if-then statement is true). That means that the correct answer to

two of the problems above is "I don't know." You probably didn't

write that. So, go back now and identify which two will have no

bearing

on the if-then statement.

You probably had a hard time doing that. The correct answers

are:

1. T

2. I don't know

3. I don't know

4. T

Why do I say this? There are technical reasons based on logic,

and those are presented as clearly as I can present them in my research

methods notes. But, I think we can think our way through

it.

In order for statement 2 to be a valid test I would have to assume that

the only way a plane could be diverted is if there's fog. That is

obviously not true. Lots of reasons could cause a plane to be

diverted.

For statement 3, I would have to make the same assumption. In

reality,

neither of those tests has any bearing on the if-then statement.

Contrast that with statement 1. Is fog a sufficient reason to

divert

a plane? Given the results of our test, the answer is yes.

In 4, we're also testing whether fog is sufficient to lead to a

diverted

plane.

To put it another way, in the if-then statement I said fog will cause the plane to be diverted; I didn't say the only way for the plane to be diverted was for there to be fog.
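If it helps to see the reasoning above spelled out mechanically, here is a minimal sketch. It treats the hypothesis as an ordinary if-then rule that is broken only by fog without a diversion, and asks, for each of the four tests, whether any possible finding could break the rule. The function names (`rule_holds`, `can_falsify`) are my own labels for illustration, not anything from your text.

```python
def rule_holds(fog: bool, diverted: bool) -> bool:
    """'If fog, then diverted' is broken only by fog without a diversion."""
    return (not fog) or diverted

# Each test fixes one fact; the other fact is what we go and find out.
tests = {
    "1. fog": ("fog", True),
    "2. no fog": ("fog", False),
    "3. diverted": ("diverted", True),
    "4. not diverted": ("diverted", False),
}

def can_falsify(known: str, value: bool) -> bool:
    """True if some possible finding for the unknown fact breaks the rule."""
    for unknown in (True, False):
        facts = {"fog": unknown, "diverted": unknown}
        facts[known] = value  # pin the fact the test presents
        if not rule_holds(**facts):
            return True
    return False

informative = {name: can_falsify(*spec) for name, spec in tests.items()}
for name, verdict in informative.items():
    print(name, "-> bears on the rule" if verdict else "-> I don't know")
```

Only tests 1 and 4 come out as able to bear on the rule, which is the same pattern as the answers above: a test that can never come out against the hypothesis can't tell you anything about it.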

B. Problems: People aren't very good at conditional logic

problems. Part of the trouble comes from the seductive nature of

the invalid logic. For example, imagine I've provided the

hypothesis

if you smoke, then you will get cancer

If someone says: "That can't be true, my uncle got cancer and

he never smoked" or that person says "That's not true, my uncle never

smoked

and he got cancer" those could both sound like good arguments if you're

not thinking carefully. But, again, I only said if you smoke,

then

you'll get cancer. I didn't say the only way to get

cancer

was to smoke. People who get cancer aren't really who I'm talking

about, I'm talking about people who smoke. One thing that

contributes

to making the invalid logic seductive is illicit conversion. That

happens when people reverse the if and then parts (so it would be

something

like "if you get cancer, then you smoked"). Obviously, once you

turn

the parts around, the "tests" presented above about our hypothetical

uncle

become valid. The important thing to remember is that the order of the if and then parts is not arbitrary, and that order has to be maintained.

Confirmation bias is a special problem for conditional reasoning.

People are much more likely to attempt to confirm their hypotheses than

they are to disconfirm them. That leads to a lot of errors in

conditional

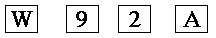

reasoning. Try this problem: There are four cards

below.

If there's a letter on one side, there's a number on the other.

Which

two should you choose to test "If there's a vowel on the front, then

there's

an even number on the back?"

The correct two cards are "A" and "9." Some data from your book (I'm guessing this is Wason & Johnson-Laird, 1972): 33% turned over only "A." That shows a confirmation bias: A is a vowel, so if there's an even number on the back, it confirms the rule. An additional 46% turned over "A" and "2." This is an error because 2 has no bearing on the situation (it is a sort of illicit conversion; I never said even numbers needed vowels on the other side). However, choosing 2 does fit a confirmation bias. It's like people think something along the lines of "If there's a vowel on the other side of 2, that will prove it true." The right combination is "A" and "9." Only 4% did this. Turning over 9 could prove the hypothesis false (a vowel on the back would break the rule), but people rarely seek negative evidence.

CogLab: We'll look at our

data from the Wason selection task.

There is a way to make people do this task correctly. In fact,

I can present you with a problem that 100% of you will get correct, and

I could guarantee that even without providing you with all of the

information

you've already seen. The rule is: If you're under 21 then

you

can't be drinking alcohol. Here are four people at a party:

| Bob | Carol | Jerry | Emily |
| --- | --- | --- | --- |
| 18 yrs. old | drinking a Coke | 43 yrs. old | drinking beer |

You're the vice squad. If you know an age, you can check the

beverage. If you know the beverage, you can check the age (the

beverages

are exactly as listed, with no secret ingredients). Who do you

need

to check to see if the rule is being followed at this party?

Everyone can tell immediately that it's Bob and Emily. Why is

this so easy when the other problems are so hard? This problem

has

a context. Additionally, this problem involves detecting

cheaters.

As we'll see in the last unit, there's a reason from evolutionary

psychology

for why we should be particularly good at problems that involve

detecting

cheaters (even if we stink at all of the rest of the problems).

That

might make a nice reaction paper discussion.
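The party problem has the same logical structure as the card problem, and it can be written down as a short check. This is only a sketch: the names (`party`, `needs_checking`) and the idea of listing beer as the only alcoholic drink are my own simplifications for this one example. A person needs checking only when the fact you can't see could turn out to break the rule.

```python
DRINKING_AGE = 21
ALCOHOLIC = {"beer"}  # assumption: the only alcoholic drink at this party

# What the vice squad can see for each person (from the party above).
party = {
    "Bob": {"age": 18},
    "Carol": {"drink": "Coke"},
    "Jerry": {"age": 43},
    "Emily": {"drink": "beer"},
}

def needs_checking(seen: dict) -> bool:
    """A person needs checking only if the hidden fact could reveal a violation."""
    if "age" in seen:
        # Known age: only someone under 21 could be breaking the rule.
        return seen["age"] < DRINKING_AGE
    # Known drink: only someone holding alcohol could be breaking the rule.
    return seen["drink"] in ALCOHOLIC

to_check = [name for name, seen in party.items() if needs_checking(seen)]
print(to_check)
```

Bob plays the role of the "A" card (the if part is true) and Emily plays the role of the "9" card (the then part is false). Carol and Jerry are like the cards that can't break the rule no matter what is on the other side.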

One last bit for this. Confirmation bias can lead to all sorts

of problems. Some examples of how it can lead research awry are

in

my methods

notes. In the real world, confirmation bias can lead to the

development

and maintenance of prejudices. In fact, the interaction between

confirmation

bias and the rules we discuss below can make it almost impossible to

overcome

prejudicial thinking. For example, a lot of the early research on gender-neutral pronouns demonstrated that when "he" was used as a generic pronoun it actually led to thoughts of men. This was true even when the person being described was in a stereotypically feminine profession (for example, "if a schoolteacher wants to get ahead he should be friendly to parents") (MacKay & Fulkerson, 1979). One problem this causes is that it plays into people's confirmation biases in situations where a stereotype already exists ("engineer ... he" confirms people's expectations). When you combine that with the fact that "she" has often been used as the generic pronoun for stereotypically feminine professions (like secretary), it can make it very hard to change people's stereotypes. If that translates into behavior ("we need to hire a new girl for the office" biases against men and "let's get the best man for the job" biases against women), it could be a problem. Add this preference for information that fits existing expectations to the heuristics below, and there could be more trouble.


IV. Heuristics. Outside of logic, what other

thinking

generalities can we uncover? There are two basic ways to work out

the solutions to problems. The algorithmic method is to use a set

of rules to methodically arrive at a conclusion. The best way to

see this is to multiply 365 by 48. You go step by step through

the

problem applying the rules for multiplication. You'll always

arrive

at the right answer (provided your math is correct).

Unfortunately,

this is impractical in a lot of real-world tasks. Instead, people

tend to rely on a set of rough-and-ready rules that can apply to a

variety

of situations. These rules are called heuristics. Provided

that the information going into the application of a heuristic is

correct,

the shortcuts will produce relatively sound answers.

Unfortunately,

the information going into heuristics is frequently incorrect (often

due

to confirmation biases), making the results suspect as well.

Let's

consider some examples of heuristics (I'm also lumping in other

characteristic

modes of thinking).

A. Representativeness: The more something resembles your

prototype of its population, the more likely you are to assign it to

that

population. This can lead you to make some errors in

reasoning.

A simple example from the book: Which of these sequences is more

likely to have been produced by flipping a coin six times in a row,

HHHTTT

or HHTHTT? Most people choose the second one, but they're equally

likely. People choose one on the basis of which one looks more

random,

not on the basis of probability.

This example is related to a tip that will help you really appreciate

the odds of winning the lottery. Imagine playing 1, 2, 3, 4, 5,

6.

Does that seem like a really unlikely combination? If you think

to

yourself "there's no way that combination could win," you've got a

chance

to save yourself some money playing the lottery. All combinations are equally likely. For example, in the UK lottery, where you pick six numbers out of 49, there are 13,983,816 combinations, and each has a 1/13,983,816 chance of winning. If you think choosing a sequence that looks

more

random increases your chances, you're fooling yourself. Whenever

I play keno (which I do for the fun of tormenting my fellow players and

not from the expectation that I'll win), I like to choose "unlikely"

looking

sequences. It makes people crazy to see me throwing my money

away.

All the while they're trying to come up with a "random" sequence that

has

a better chance (or falling back on a belief in magic and trying to

read

the keno vibes in the air).
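If you want to check the arithmetic behind the coin and lottery examples yourself, here is a minimal sketch. It assumes a fair coin and a lottery in which every set of six numbers is equally likely; the variable names are just mine.

```python
from fractions import Fraction
from math import comb

def sequence_probability(flips: str) -> Fraction:
    """Probability of one exact sequence of fair coin flips."""
    return Fraction(1, 2) ** len(flips)

# The "orderly" and the "random-looking" sequences are equally likely.
p_blocky = sequence_probability("HHHTTT")
p_mixed = sequence_probability("HHTHTT")

# Number of ways to choose 6 numbers out of 49, ignoring order.
tickets = comb(49, 6)
p_ticket = Fraction(1, tickets)

print(p_blocky, p_mixed)  # both are 1/64
print(tickets)            # 13983816
```

Notice that nothing in the calculation looks at which numbers or which flips you chose. That is the whole point: 1, 2, 3, 4, 5, 6 gets exactly the same 1/13,983,816 as any "random" ticket.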

Here are some tips based on other people's faulty thinking that will

help you maximize your expected value in a lottery play (which is not

entirely

dependent on the odds). The idea is to pick numbers nobody else

would

pick so if you should be so fortunate as to win you won't share your

prize.

First, a lot of people think of 1, 2, 3, 4, 5, 6 as a good choice

because

"nobody else will think of it." It's representative of a choice

nobody

will play, so plenty of people actually play it. Therefore, it's a bad choice to make. Another

strategy

that's representative of "numbers other people don't pick" is to choose

all numbers higher than 31 (numbers under 32 are possible birthdays,

which

a lot of people play). But, since a lot of people do this, and

there

are usually fewer numbers over 31, you're actually hurting

yourself.

One last thing (it's not really representativeness, but it's related to

the lottery): Avoid any strategy with "due" numbers (as in "42

hasn't

come up in 10 draws so it's due"). If there's any possible bias

in

the system it's that a slight physical defect in one of the balls or

the

mechanism helps or hurts a particular number. If 42 comes up 10

draws

in a row, there's a reasonable chance that the 42 ball is defective and

more likely to be picked, so go with it. If it never comes up,

the

chances are it's defective the other way, so you would be insane to

pick

it because it's "due." Your best case scenario when a ball never

comes up is that it's just a result of random chance, which is the way

it's supposed to work anyway. (Lottery information from Eastaway,

R., & Wyndham, J., 1998, Why do buses come in threes: The

hidden

mathematics of everyday life).

The representativeness heuristic can be a large factor in perpetuating

stereotypes. Combine it with confirmation biases and you will

notice

examples which are representative of the stereotype, store those away,

and use them for further processing. Think about astrology.

Cancers (like me) are supposed to be moody and crabby. If you

know

this, you might take note of my behavior when it seems representative

of

the cancer stereotype, and thereby reinforce your belief in the

accuracy

of astrology. Behavior in non-cancers that is representative of

cancers

is less likely to be noted, and so unlikely to shake your belief in

astrology.

This is one of the problems with heuristics. Once a little bias

gets

into the system, the results aren't likely to be very successful.

B. Availability: If I ask you how likely something is,

you try to think of an example. The easier it is to think of an

example,

the more likely you say it is. For example, what's the

probability

of a batter reaching first base by getting a hit? By having the

catcher

drop a third strike? You should feel availability taking over by

trying to think of an example of each and using the ease of getting an

example to make your estimate.

Your book's example: Are there more words in English that start

with 'k' or that have 'k' as the third letter? You'll start

trying

to think of words, and conclude that starting with 'k' is more

common.

That's because you organize your mental lexicon around first letters

and

not third letters. So, it's easier to search that way and you get

more results. As you probably guessed, there are more with 'k' in

the third letter. Anything that affects the ease with which you

think

of something will affect availability (maybe as an exercise you should

try thinking of influences now):

1. Frequency: More frequent = more available. Again,

if you put in confirmation biases then what you encode more frequently

may not reflect the population, making your estimate inaccurate.

2. Familiarity: More familiar stuff is easier to recall

and so more available.

3. Vividness: More vivid stuff is easier to recall, so

more available. This is one reason why flying seems more

dangerous

than driving. Vivid plane crash stories are easy to retrieve, so

it seems like a crash is more likely (also plane crashes get more

coverage,

so frequency and familiarity come into play).

4. Recency: More recent stuff is easier to retrieve.

Demonstration: I have a powerful example of the way

availability

can make something really simple seem remarkable.

C. The simulation heuristic: Ease of simulation will affect

people's judgments. The example in your book describes two men

who

are on flights at the same time, are equally delayed getting to the

airport

(in the same car), and both arrive 1/2 hour after their planes were

supposed

to leave. However, when they arrive they find that Mr. Crane's

flight

left on time, but Mr. Tees' flight was delayed, and left just five

minutes

before they arrived. Who's more annoyed? Most people think

Mr. Tees is more annoyed. The reason is that it's easier to simulate how he could have arrived just five minutes earlier than a half hour earlier.

Some influences:

1. Undoing: When people simulate undoing an event, it's

more common for them to file down an unusual detail to make it more

typical

(called a downhill change) than it is to add a new detail (an uphill

change).

It's easier to make an event more like the typical script than to make

it less like that script.

2. Hindsight: Once you know the outcome, it's easier to

simulate how that outcome happened. That makes it seem more

likely,

and can even make you change how you remember your predictions.

For

example, if someone on a game show decides to go for it and misses, you

might re-simulate and decide that it was obvious they should have

stayed.

Demonstration: I have a few simulation examples.

D. And the rest: Here are some more influences on thinking.

1. Anchoring and adjustment. Do the multiplication problems

in the demonstration.

Demonstration: There will be a multiplication problem

to perform, but you will only have a short amount of time to do

it.

One half do the first problem. The other half do the second

problem.

Compare answers.

Another: You get one of two lotteries. The first is to

draw a red marble from a bag with 50% red and 50% white. The

second

is to draw seven reds in a row from a bag with 90% red and 10% white

(we'll

replace the marble drawn each time to keep the odds the same on each

draw).

Which gives you the best chance of winning?

Anchoring and adjustment is related to the fact that people start from

the first part of the problem (the anchor) and then make adjustments

from

that when heuristics take over. If the anchor is low, people tend

to guess low. If the anchor is high, people tend to guess high.
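For the marble lotteries, you can check your intuition against the numbers once you've made your guess. This is a small sketch that assumes the draws are independent (which is what replacing the marble each time gives you); the variable names are mine.

```python
# Lottery 1: one draw from a bag that is 50% red.
p_single_draw = 0.5

# Lottery 2: seven reds in a row, with replacement, from a bag that is 90% red.
p_seven_reds = 0.9 ** 7

print(round(p_seven_reds, 3))        # 0.478
print(p_seven_reds < p_single_draw)  # True
```

If you anchored on the 90% and didn't adjust far enough, the second lottery probably looked better than it is.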

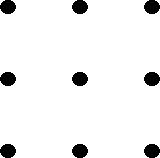

2. Set/fixedness: We have a lot of experience with the

world, and this usually influences our behavior, even when it's better

if it doesn't. Set: An example of this is the classic 9-dot

problem. If you ask people to solve it, they usually get stuck

because

they don't think outside of their set, but that's what the solution

requires.

Fixedness: We come up with a solution that works and keep

applying

it in the face of simpler solutions.

Functional fixedness: We can't stop thinking of an object in

its usual function, and that hinders finding the solution.

Demonstration: One for each above.

For set, connect the nine dots below by drawing four straight lines.

For fixedness your job is to do some jug problems. Basically,

you're given three jugs of various sizes and you're supposed to get a

particular

amount using those jugs. Solve each of the problems in the table

below (from Myers, 1995):

| Problem | Jug A | Jug B | Jug C | Target Amount |
| --- | --- | --- | --- | --- |
| 1 | 21 | 127 | 3 | 100 |
| 2 | 14 | 46 | 5 | 22 |
| 3 | 18 | 43 | 10 | 5 |
| 4 | 7 | 42 | 6 | 23 |
| 5 | 20 | 57 | 4 | 29 |
| 6 | 23 | 49 | 3 | 20 |
| 7 | 15 | 39 | 3 | 18 |
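Once you've tried the problems yourself, here is a sketch you can use to check your work (it gives the answers away, so do the table first). The notes don't spell out a solution; the "long" recipe below, fill B, pour off A once and C twice, is the standard one for this kind of jug demonstration, and it happens to work for every row of the table. The two "short" recipes are the obvious simpler alternatives. All of the function names are mine.

```python
# (Jug A, Jug B, Jug C, target) for each problem in the table.
problems = {
    1: (21, 127, 3, 100),
    2: (14, 46, 5, 22),
    3: (18, 43, 10, 5),
    4: (7, 42, 6, 23),
    5: (20, 57, 4, 29),
    6: (23, 49, 3, 20),
    7: (15, 39, 3, 18),
}

def long_method(a: int, b: int, c: int) -> int:
    """Fill B, pour into A once and into C twice: B - A - 2C."""
    return b - a - 2 * c

def short_methods(a: int, c: int, target: int) -> list[str]:
    """Simpler two-jug recipes that also reach the target, if any."""
    options = {"A - C": a - c, "A + C": a + c}
    return [label for label, amount in options.items() if amount == target]

for number, (a, b, c, target) in problems.items():
    works = long_method(a, b, c) == target
    print(number, "long method works:", works, "| shortcuts:", short_methods(a, c, target))
```

The long method works on all seven, but the last two also have a much shorter solution. If you kept grinding through B - A - 2C on problems 6 and 7, that's fixedness.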

For functional fixedness imagine this situation: You're in a

plane and it crashes in the desert. You're miles from help, and

you

have no food and water. All you have is a parachute, a pocket

mirror,

a compass, and a map. What's your most important asset?

3. Confidence: We usually have very poor calibration of

comprehension (knowledge about how well we did on a task). This

applies

to decisions as well. You can test that by answering this

question:

“Hirsute probably means ‘really hairy’ or ‘habitually late.’”

(Choose

one.) Generally, people are more confident of their answers to

these

questions than they are correct. The more confident, the more

they

overestimate their ability.

4. Belief: If I get you to state a belief first, it’s a

lot harder to get you to change your mind. So, if I say to you

“it’s

much safer to be in the back of a plane if there’s a crash, why do you

think that is?,” you can probably make something up to account for

it.

Then, if I give you opposite evidence, it will be really hard to

persuade

you to change your mind. Better yet, if I tell you that my

evidence

was just made up, you’ll still cling to your incorrect belief based on

that information.

5. Framing: How you ask the question will impact how people

feel about it. So, if I tell you that 10% of people who eat a

particular

kind of sushi keel over and die, or I tell you 90% come through

unharmed,

you’ll think it’s more dangerous in the 10% die case (I’ve framed the

problem

negatively).

CogLab: We'll look at our

results from the decision making demonstration.


V. Probability. One last thinking topic has to do

with people's generally poor comprehension of the laws of

probability.

This generally leads to a lot of thinking errors. (Most of this

came

from an excellent book called Using Statistical Reasoning in Everyday

Life.

The book will be published by Wadsworth this summer.

Unfortunately,

I don't currently know the author's name. If you've ever wondered

why you need to know anything about statistics, this book would be an

excellent

choice. What is below is just a taste.)

CogLab: We'll talk about

the Monty Hall problem as an interesting example of probability.

A. Conjunction. How do you combine the probabilities of

a bunch of independent events? Try the example in the

demonstration.

Demonstration: Estimate your likelihood of avoiding all

of the causes of death on the overhead.

Generally, people overestimate. Even though each number is high

(the odds of avoiding a car accident are 99%), their conjunction is not

so high. To compute it correctly, multiply all of the probabilities together. Note

how anchoring and adjustment played into your estimate. Others

might

also have been involved. The lack of ability to correctly predict

the probability of conjunctions can have implications for real

life.

For example, if there's a 90% chance I'll finish the paper on time, a 90% chance I'll finish the take-home test, and a 90% chance I'll get my chores done, what's the chance of getting all three done? (It's not 90%.)
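Here is the multiplication for the three-task example, as a minimal sketch. It assumes the three tasks are independent of each other, which is what the conjunction rule above requires; the dictionary is just my way of labeling the numbers.

```python
from math import prod

# Chance of finishing each task on time (assumed independent).
chances = {"paper": 0.9, "take-home test": 0.9, "chores": 0.9}

# Conjunction rule for independent events: multiply the probabilities.
p_all_three = prod(chances.values())

print(round(p_all_three, 3))  # 0.729
```

So three "90% sure" tasks together are only about 73% sure, and every task you add to the list pulls the total down further.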

B. Conjunction fallacy. Read about Mr. F in the

demonstration

below.

Demonstration: Which fact about Mr. F seems more

likely?

Try Linda the bank teller.

Basically, the probability of the conjunction of two events has to be smaller than the probability of either alone (or at the extreme the same size).

Most people use heuristics to estimate conjunctions (like

representativeness

for Mr. F) and overestimate the conjunction. Use a Venn diagram

to

illustrate this. We can also consider the story about

Linda.

You know the rule now, but 85% of the people reading about Linda went

for

the conjunction fallacy. Why?
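The rule can be written out in one line. This is just a sketch in standard probability notation; A and B are my labels for any two facts you might be asked about (two statements about Mr. F, say, or two statements about Linda).

```latex
P(A \cap B) = P(A)\,P(B \mid A) \le P(A),
\qquad
P(A \cap B) = P(B)\,P(A \mid B) \le P(B)
```

Because a conditional probability can never be bigger than 1, multiplying by it can only shrink a probability or leave it the same. That's the algebra behind the Venn diagram: the overlap of two circles can't be bigger than either circle.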

CogLab: We'll look at the

results from our typical reasoning demonstration.

C. The power of chance. Now that you know the rule, try

the stockbroker example.

Demonstration: What are the odds of picking the direction

of change in a stock price 10 weeks in a row?

If you use the correct conjunction rule, you might say the odds aren't

too high. But, if you imagine that a whole crowd of stock pickers are just flipping coins, the odds are that one of them will get all 10 right. In other words, it's very likely to happen just by chance. The odds of one particular person getting it are low; the odds of someone getting it are not. It's sort of like the lottery. Your odds aren't

so great. But, the odds that someone will win are pretty

high.

In other words, just because something is very improbable doesn't mean

nobody will achieve it if enough people are in the game. I guess

the message is not to be too impressed with something that could have

happened

just by chance.
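To put rough numbers on the stockbroker example, here is a minimal sketch. It assumes each pick is a fair coin flip and that the pickers are independent. The 1,000 pickers is a number I made up for illustration; plug in whatever number of pickers you like.

```python
# Chance that one coin-flipping picker calls 10 weeks in a row correctly.
p_one_picker = 0.5 ** 10  # 1/1024, a bit under 0.1%

# Hypothetical number of pickers in the game (an assumption, not from the notes).
n_pickers = 1000

# Chance that at least one of them gets all 10 right:
# 1 minus the chance that every single picker fails.
p_someone = 1 - (1 - p_one_picker) ** n_pickers

print(f"one particular picker: {p_one_picker:.4%}")
print(f"at least one of {n_pickers}: {p_someone:.1%}")
```

With these made-up numbers, any one picker is a long shot, but the chance that somebody in the group pulls it off is well over half.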

D. Amazing coincidences. The example above is related to

amazing coincidences (like dreaming about being in a car crash and then

crashing). Or, the eerie similarities between identical twins

raised

apart. Read the description in the demonstration.

Demonstration: Description of the similarities between

two women.

These women aren't twins. They were randomly paired in a study

by Wyatt, Posey, Welker, and Seamonds (1984). They found that

random

pairs also produced a bunch of similarities when they were compared on

a lot of dimensions. The point is that the odds of a particular

coincidence

(like political leanings) might be low, but the odds of some

coincidence

out of the millions of possibilities are actually quite high.

It's

not surprising if twins reared apart are similar in some way.

You could also relate this to fortune telling (segue back to Eastaway

and Wyndham, 1998). It would be impressive if a fortune-teller

made

a very specific prediction that came true ("you will meet an old friend

on the street this week"). If a general prediction came true

("something

interesting will happen this week"), that's not impressive. It's

especially not impressive given the heuristics and confirmation bias

discussed

above. Let's consider a prediction. In a room with 23

people,

I predict two will have the same birthday. If I'm proven correct,

is that impressive? Naive intuition might think it is (with 365

days

to choose from, the odds of two out of 23 having the same birthday seem

low). However, the odds are actually 51%. You can calculate

them by using the conjunction rule. Eastaway and Wyndham show

it's

easier to do this by calculating the odds of not having two birthdays

the

same. For 23, it's 365/365 × 364/365 × 363/365 × ... × 343/365.

That's 49%. So, there's a 51% chance of two birthdays being the

same.

What would be more impressive is if I picked two people and said they

would

have the same birthday. Then my odds are 1/365, which is a much

more

difficult prediction to get right by accident. So, even though

the

situation feels similar, one is actually a lot more likely.
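Here is the birthday calculation as a short sketch, following the "odds of not having two the same" approach described above. It assumes 365 equally likely birthdays and ignores leap years; the function name is mine.

```python
from math import prod

def p_all_different(people: int, days: int = 365) -> float:
    """Chance that no two people share a birthday: 365/365 x 364/365 x ..."""
    return prod((days - k) / days for k in range(people))

p_no_match = p_all_different(23)  # about 0.49
p_match = 1 - p_no_match          # about 0.51

# Naming two specific people in advance is a much harder prediction.
p_named_pair = 1 / 365

print(round(p_no_match, 2), round(p_match, 2), round(p_named_pair, 4))
```

The two predictions feel alike, but "some pair in the room" has 253 different pairs working in its favor, while "these two people" has exactly one.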

I mentioned the heuristics above. How do they come into play?

They help you take note of the amazing things that do happen and

disregard

the amazing things that don't happen. They also help to determine

what counts as amazing. Eastaway and Wyndham (1998) point out

that

the odds of George Washington being born on February 22 and Queen

Victoria

being born on May 24 are about 1 in 130,000 (1/365 × 1/365). In other words, those two

birthdays happening are very unlikely. But, it's not an

impressive

coincidence because you attach no significance to it. A lot more

on coincidences can be found at Skeptical

Inquirer. I think the section on Lincoln/Kennedy coincidences

is particularly relevant for the discussion in this section.

E. Certainty. It's possible to know with relative certainty

what someone will do in a situation. This can be based on common

reasoning errors and the heuristics above. If people are relying

on intuition to interpret how remarkable events are, then they will be

easily tricked.

Demonstration: I have a math problem for you to try.

I think you will all get the same sum, which I will tell you once

you've

had a chance to do the problem.

There's a common mistake that leads to this result. This one's

not 100% certain, but it's very likely. You're probably not too

impressed,

however. Let me do some magic tricks.

Demonstration: I have a couple of magic tricks.

Were these impressive? I won't spoil the show by telling you

how they're done; I'll just let you know that they absolutely had to

work

out. My point is this: It shouldn't impress you when

someone

does something that had to come out the way it did. Not having an

appreciation of math (for my magic) or psychology (for most of the rest

of this unit) can lead you to be overly impressed. If there's

time,

I'll wrap up with a very impressive magic trick relying entirely on

psychology.

Let's see if you can figure it out.


Cognitive Psychology Notes 13

Will Langston
